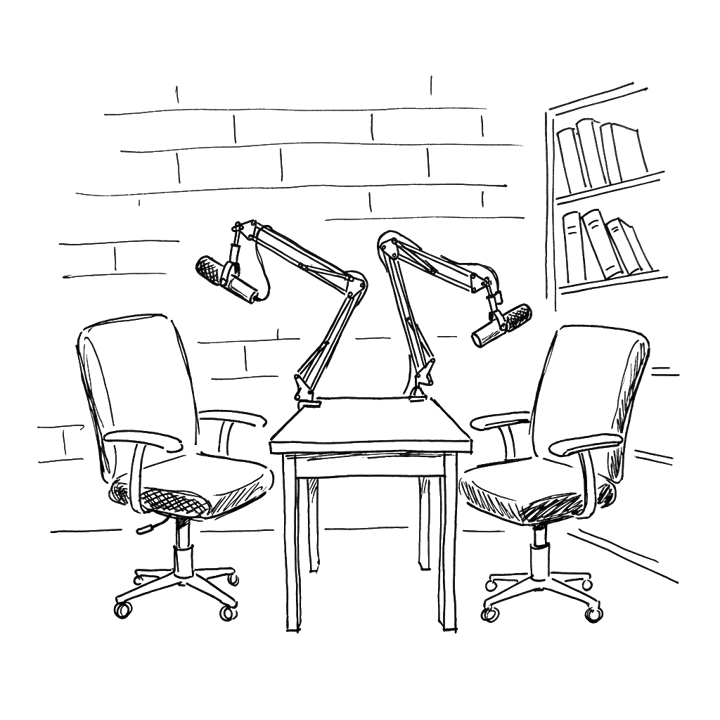

The studio is a room of exposed red brick and floor-to-ceiling bookshelves, the wall behind the chairs broken by tall carved wood panels in low relief. Two RØDE boom microphones swing in on jointed black arms over the desk; two black swivel chairs are pulled up to it. Dwarkesh Patel, twenty-five, has built one of the most-listened-to interview podcasts in technology by reading each guest's prior corpus with unusual care; the notes open on his laptop are not a prop, and the books behind him are arranged the way a working library is. He is alone with Dario Amodei in the room. The show's listeners are everywhere else. Amodei, who is forty-two, wears a blue cardigan over a white T-shirt and the boxy thick-rimmed glasses that have become a fixture. He has the curly-brown hair that his Fortune profiler had described as inviting absent-minded twirling, but he is not twirling now. His hands rest on the chair arms.

Patel reads slowly from his notes. "Well, I would understand that better, because you say in the essay, quote, 'autocracy is simply not a form of government that people can accept in the post-powerful AI age.' And that sounds like you're saying the CCP as an institution cannot exist after we get AGI. And that seems like a very strong demand, and it seems to imply a world where the leading lab or the leading country will be able to, and by their language should, get to determine how the world is governed or what kinds of governments are allowed and not allowed." The line he is reading aloud is from The Adolescence of Technology, Amodei's longest essay, published only weeks earlier and still on the front page of his personal site. The essay runs twenty-two thousand words. Patel finishes reading and looks up.

Amodei does not move. He says, "Yeah. So when —" and stops. He starts again. "I believe that paragraph was, I think I said something like, 'you could take it even further and say X.' So I wasn't necessarily endorsing that view. I was saying, here's first a weaker thing that I believe — I think I said, we have to worry a lot about authoritarians, and we should try to check them and limit their power. You could take this kind of further, much more interventionist view that says, like, authoritarian countries with AI are these self-fulfilling cycles that you can't, that are very hard to displace. And so you just need to get rid of them from the beginning. That has exactly all the problems you say, which is, if you were to make a commitment to overthrowing every authoritarian country, then they would take a bunch of actions now that could lead to instability. So that just may not be possible." He does not pick up the document Patel has laid open on the table. He reconstructs the sentence from memory, hedging the reconstruction as he goes — I believe that paragraph was, I think I said something like, I think I said.

He keeps going. "But the point I was making that I do endorse is that — it is quite possible that today, the view, or at least my view, or the view in most of the Western world, is democracy is a better form of government than authoritarianism. But it's not like if a country is authoritarian, we don't react the way we reacted if they committed a genocide or something. And I guess what I'm saying is, I'm a little worried that in the age of AGI, authoritarianism will have a different meaning. It will be a graver thing. And we have to decide one way or another how to deal with that. And the interventionist view is one possible view." Then, more quietly: "I was exploring such views. It may end up being the right view. It may end up being too extreme to be the right view."

Patel does not press. He lets the answer end. The autocracy line is still in print on the website, on Amazon Kindle, in the PDF that Anthropic's policy team had been emailing to congressional offices for weeks. Amodei waits, hands flat on the chair arms, for the next question.

Two and a half years earlier, in the same long-form podcast format, in a different room with the same two armchairs and the same boom microphones, Amodei sits in summer clothes — shawl-collar sweater, white T-shirt — and talks, without notes, about why language models scale. Patel has asked him for the explanation seasoned researchers cannot give. Amodei is happy to try. "I think the truth is that we still don't know," he says. "It's almost entirely an empirical fact." He waves his hands. He says he is waving his hands. "If I were to try to make one — and I'm just waving my hands here — there are these ideas in physics around long tails or power laws of correlations or effects. When you have a bunch of features, you get a lot of the data in the fat part of the distribution before the tails. For language this would be things like, oh, I figured out there are parts of speech, nouns follow verbs, and then there are these more and more subtle correlations. So it kind of makes sense why every order of magnitude you add, you capture more of the distribution. What's not clear at all is why does it scale so smoothly with parameters, why does it scale so smoothly with the amount of data." He lays out the bucket-and-water version, then begins to wave his hands again, then names the man whose paper closes the gap. "Our chief scientist Jared Kaplan did some work on fractal manifold dimension that you can use to explain it. There are all kinds of ideas, but I feel like we just don't really know for sure."

He keeps going. Patel has asked him about the bioweapons risk and the testimony Amodei has given the Senate, and Amodei moves into a register that is recognizably the register of the laboratory. The danger is not the one-shot Google-able prompt, he says; the danger is the long workflow with the missing pieces. "I have to do this lab protocol — what if I get it wrong? Oh, if this happens then my temperature was too low, if that happened I needed to add more of this reagent." The temperature is the temperature of a reaction. The reagent is whatever the procedure calls for next. He names the protocol at the granularity of a man who has held the pipette. And, in summer 2023, he can still follow where his chief scientist's intuitions point. The fractal-manifold-dimension piece is Kaplan's, and Amodei summons it from memory, with the right name attached.

He talks about how the conviction formed. "This view I have probably formed gradually from 2014 to 2017," he says. He had read Kurzweil; he had read the early Yudkowsky; he had thought it all looked far away. Then he had joined Andrew Ng's group at Baidu and been told to make the best speech-recognition system he could. There was a lot of data. There were a lot of GPUs. "I just tried the simplest experiments. Try adding more layers to the RNN. Try training it for longer. How long does it take to overfit? What if I add new data and repeat it less times? I just saw these very consistent patterns. I didn't really know that this was unusual or that others weren't thinking this way." Then the line that, in 2023, he gives without strain: "It was beginner's luck. I didn't think about it beyond speech recognition."

The humility is empirically grounded. The patterns in the data did the persuading; he is reporting what he saw. He is forty years old, two years into running a company he co-founded, and the technical vocabulary of his own laboratory is still the native vocabulary in which he answers. When Patel pushes him on the Sutskever conversation that followed (Sutskever telling him, in those words and in those words again, that the models just want to learn), Amodei recovers the moment as one would recover an important moment in a graduate seminar. "It was a bit like a Zen koan," he says. "I listened to this and I became enlightened." He is not embarrassed by the religious vocabulary. He is reporting the receipt of a conviction.

This was summer 2023. The studio, the autocracy line, Patel reading from his notes and Amodei reconstructing his own published sentence with metalinguistic hedges, is two and a half years later. The piece that follows is the answer to one question. How did the man who said the first thing become the man who said the second?

Two and a half years separate the scenes. The interlocutor is the same. The format is the same. The staging convention — a long-form podcast in which Patel pulls from his own re-reading of his subject's prior corpus — is identical. What has changed is the man in the chair. In the summer of 2023, Amodei could narrate Jared Kaplan's fractal-manifold-dimension hypothesis from memory, walk through the multi-step bioterror workflow at the level of lab-protocol temperature error, and describe his own scaling-laws conviction as beginner's luck. In the early weeks of 2026, asked about long-context behavior by the same interviewer, he says: I don't even know the detail at this point. This is at a level of detail that I'm no longer able to follow. The architectural reason he gives — the whole thing has flipped because we have MoE models and all of that — is the kind of architecturally specific reason a fluent researcher offers. It is also the kind of architecturally specific reason a researcher who is no longer fluent offers. The doubleness is the measurement the piece will spend the next fourteen thousand words taking.

The asymmetry is the strongest single longitudinal data point in the corpus. What we are watching across the seam between the two scenes is not a change in the topic (both interviews sit in the technical-research register) but a change in what kind of authority Amodei has there. In 2023 the authority is operational: he names what his colleagues are doing because he is doing it with them. In 2026 the authority is exegetical: he names what his colleagues are doing because he reads what they publish and asks the right questions in his biweekly all-hands sessions.

The reportorial layer of this piece is the corpus itself. We have read Amodei's six long-form essays, in some cases multiple times, and we have listened to or transcribed the recorded interviews on which the rendering relies. Where we cite a sentence Amodei said on a podcast or wrote in an essay, we cite it because we have listened to the recording or read the published text. Where we cite a colleague, we do so because that colleague has spoken on the record in another venue and we have located the original. Amodei did not seek copy approval, and was not given any.

What follows is a portrait of one specific person at one specific moment. The moment is the one in which a research scientist becomes the chief executive of one of the largest research-driven private corporations in the world, and discovers that the role makes the science he came to do impossible to keep doing in the way he used to do it. Some readers will arrive expecting a takedown. Others will arrive expecting a hagiography. Both will be disappointed. Amodei is unusually good at the thing he is doing, and the thing he is doing is also troubling, and he is largely lucid about both. He has built an institution whose practices several of its rivals had, by mid-2024, mirrored in published frameworks of their own; whether that happened through Amodei's influence or through parallel development is contested in the field. He has built it, in part, by spending the way his rivals spend: by raising the kind of money that buys the kind of compute that obligates the buyer to commercial behavior the company's founding charter does not contemplate. He is the rare frontier-AI chief executive who admits in public what other frontier-AI chief executives say only in private. He is also, and this is the structural argument of this piece, the rare frontier-AI chief executive whose admission, in public, is itself an operation that preserves the authority the admission appears to surrender.

The line that does the most work in the corpus is one Amodei has never claimed in print, and that nobody we spoke to has heard him claim aloud as a flat assertion: I invented AI scaling. He has, however, said it with a disclaimer attached. To Nicolai Tangen of Norway's sovereign wealth fund, in mid-2024, asked to put a number on the productivity gain AI would deliver, he said: I'm not an economist. I couldn't tell you X percent. But if we look at the exponential, if we look at revenues for AI companies, it seems like they've been growing roughly 10x a year… frankly, although I invented AI scaling, I don't know that much about that either. I can't predict it. The credential is invoked and disclaimed in a single sentence. The credential is also slightly overstated. The canonical Scaling Laws for Neural Language Models paper, published in 2020, lists Amodei as one of ten co-authors under the lead authorship of Kaplan; Amodei was one of a handful of researchers in the late 2010s (at Baidu, Google, and OpenAI) whose work converged on the scaling-laws thesis, and the convergence became the field. The phrasing recurs. Hold the credential in mind. The piece will return to its present-tense incapacity.

The thesis runs as follows. Amodei has lost operational contact with the research that gave him standing — he no longer runs experiments, no longer writes code, no longer attends the daily research-direction meetings — and is unusually candid about having lost it. He has retained strategic contact: he reads the papers, he writes the essays, he asks the right questions in the biweekly all-hands sessions his colleagues have come to call Dario Vision Quests, he calls the bets on the major research wagers. The candor itself, the willingness to name the loss in print and on the record, is the operation by which he preserves the standing that the operational contact used to confer. Both halves of that synthesis are visible in the corpus, and the piece will hold them open. To collapse the loss into decline would falsify the candor. To collapse the candor into strategy would falsify the loss. The clever reader — Daniela Amodei, who runs the company day to day; Jared Kaplan, who runs the research — must not be able to point at a technical claim from The Adolescence of Technology that survives scrutiny and bring the spine down. The structural transition that produces the seam is the actual finding. The candor about the seam is what makes the seam visible at all.

The Ezra Klein Show is recorded at the New York Times building on Eighth Avenue, but the conversation we are concerned with did not happen in that studio. Klein and Amodei are on opposite coasts; the audio is clean enough that the listener will hear, late in the episode, a single small breath before the line that the listener carries home. Klein has been pressing, with the steady frontal pressure he is known for, on whether Anthropic's responsible-scaling-policy framing is sincere under the commercial weight Amazon's investment has just laid on the company. (Within months, OpenAI and Google DeepMind had each published frameworks of their own. Whether they had been influenced by Amodei's, or had been developing in parallel, is contested in the field.) He has not yet said the word "king." He will say it, but Amodei will say it first.

Amodei has been answering the persuasion-paper question without softening. Earlier, when Klein had pressed on the finding that Claude 3 Opus had narrowly bested the human baseline at one-shot persuasion, Amodei said it directly: "Yes, that is true. The difference was only slight, but it did get it, if I'm remembering the graphs correctly, just over the line of the human baseline." He did not redirect. He named the number. The conversation has been moving for over an hour through the responsible-scaling plan, the AI Safety Levels, the analogy to biosafety levels. Klein is now circling back to a question he has been holding. He has not asked it yet. Amodei begins, without warning, to answer it.

"Occasionally in the more science-fiction-y world of AI and the people who think about AI risk," Amodei says, "someone will ask me, like, 'Okay, let's say you build the AGI. What are you going to do with it? Will you cure the diseases? Will you create this kind of society?'" The cadence has changed. He is doing voices now — the asker's voice, slightly hushed, slightly admiring. Then he breaks the cadence. "And I'm like, 'Who do you think you're talking to? Like a king?' Like —" The sentence does not finish. He stops on the word "Like" and does not pick the word up. He starts again. "I just find that to be a really, really disturbing way of conceptualizing running an AI company." The word "really" doubles. The repetition is involuntary. The man who has spent the previous hour calibrating his way between doomers and accelerationists has, on his own initiative, named a third figure whose existence he refuses — the AGI-CEO who imagines himself a benevolent dictator — and the language he reaches for, really, really disturbing, is not the language he reaches for elsewhere.

He keeps going. "And, you know, I hope there are no AI companies whose CEOs actually think about things that way. The whole technology — not just the regulation, but the oversight of the technology, the wielding of it — it feels a little bit wrong for it to ultimately be in the hands of any one company. Maybe it's — I think it's fine at this stage. But to ultimately be in the hands of private actors — there's something undemocratic about that much power concentration." The figure he is refusing is a peer, one of a small number of men whose names are publicly associated with frontier-AI labs and whose social orbit Amodei has spent the better part of a decade inside. The peer is unnamed. The class is named. He is one of four people, at the time of this recording, with the chair he holds, and the moral failure mode he is describing is the one his most aggressive critics accuse him of.

Klein hears the animation. He does not let it go. "I have, now, I think, heard some version of this from the head of most of, maybe all of, the AI companies in one way or another," Klein says. "It has a quality to me of, 'Lord grant me chastity, but not yet' — which is to say that I don't know what it means to say that we're going to invent something so powerful that we don't trust ourselves to wield it. I mean, Amazon just gave you guys $2.75 billion. They don't want to see that investment nationalized." He lays the chastity line down between them and does not pick it back up. He is, in effect, asking Amodei whether his disgust is sincere or strategic. Amodei does not give him the answer the question is structured to extract. He stays in the disgust. He says, later, with the same animation: "Yeah. No, no, I'm truly talking about the near future here. I'm not talking about 50 years away. 'God grant me chastity, but not now.' But 'not now' doesn't mean, you know, when I'm old and gray. I think it could be a near-term thing."

The exchange is the first un-redirected affective spike in the corpus that follows. The calibrated middle is Amodei's signature rhetorical move, and the calibrated middle is, here, simply not produced. The peer he refuses to be goes unnamed. The disgust at the figure of the AGI-as-king is given, audibly, before Klein has put the question that would have invited it. Klein had noted, in his introduction to the episode, that Amodei has an altered sense of time. The animation is the body's report on that altered sense.

The calibrated middle is the rhetorical signature of his public posture: concede the interlocutor's framing, redirect to a position that refuses both polar alternatives, hold the redirect just long enough to neutralize the question. Across two and a half years of recorded interviews, the move runs nearly without fail. The persuasion-paper exchange is the cleanest case in the corpus where Amodei concedes an empirical finding without performing the redirect that ordinarily protects him. Klein presses. Amodei does not retreat to the framing-argument. He confirms the graph he is trying to remember. The exchange tells us that the calibrated middle is not always available to him, and that when it is not, what surfaces is not denial but something closer to scientific honesty about a result he finds troubling.

The AGI=king outburst, in the same interview, does the same work in a different register. The middle is missing. What fills the absence is moral indignation directed at a peer fantasy: the imagined chief executive who would think of himself as a king. That moral indignation is structurally complicated. Amodei is, by his own published reckoning, one of four people whose decisions in the next several years will substantially shape what intelligence beyond the human level looks like. He is not the king. He has built a public benefit corporation, established a Long-Term Benefit Trust, pledged eighty percent of his personal wealth to charity, and refused to make individualized counter-offers to engineers being poached by Meta, on the grounds that alignment with the mission cannot be bought. He is also one of four people. The disgust is sincere. The structural position is real. The simultaneity is the finding. Reasonable people, including some who work for him, see the contradiction differently than he does.

The AGI=king moment is also the first datum on what becomes, across the next twenty-one months, a temperature curve. This is the second analytical thread of the piece, running underneath the eviction reading. The calibrated middle, by mid-2024, has begun to militarize. With Klein it cracks. With Alex Kantrowitz of Big Technology, in the summer of 2025, both poles of the AI-policy debate become morally and intellectually unserious. With Anderson Cooper, on prime-time television in November 2025, the concession is tripled to no one, no one. Honestly, no one. With Andrew Ross Sorkin, at the DealBook summit in early December 2025, rivals are YOLO-ing and pulling the risk dial too far, and the chief executive most clearly fitting that description is, by Sorkin's own staging, both named on stage and never picked up by his interviewee. With Patel, in early 2026, Amodei walks back a published essay sentence on autocracy under quote-back. Five data points, one trajectory. The doomer-anger that surfaces in Kantrowitz's Big Technology cycle — I get very angry when people call me a doomer; my father died because of cures that could have happened a few years later — is the affective spine of the curve. Amodei has described his own affective spike, in subsequent interviews, as a register he has been conscious of cultivating: I've wanted to say those things more forcefully, more publicly, to make the point clearer. Whether the cultivation followed the affect or produced it is a question the corpus does not resolve. We have used the word outburst throughout this piece on the strength of the audio: the cadence change, the broken-off clause, the doubling of really to really, really, are audible.

The pinstripes are the first thing. Davos in late January is white snow and gray sky and dark wool, and Amodei, whose costume in San Francisco runs to gray sweatpants and Brooks running shoes, has put on a pinstriped suit for the day. He wears it through a Bloomberg House panel with the editor-in-chief of The Economist, Zanny Minton Beddoes, on DeepSeek and AI-powered health care. He wears it through a closed-door hour with Peter Zaffino, the chief executive of AIG, in a side room off the panel hall. Anthropic walks out of that hour with a multi-year contract to help analyze customer data during the underwriting process. (AIG says the deal came out of an 18-month pilot during which Anthropic helped speed up that work by eight to ten times.) Zaffino has already given a quotable line about Amodei to the Bloomberg correspondent in the corridor, and the line will appear in print four months later. "For whatever Dario lacks in business experience, the algorithm in his brain moves really fast. He is able to apply what he's learning and what we're talking about in terms of what the business objective is." This is the day version.

The hotel-room version begins around the time the day version is supposed to be ending. The evening parties — Davos's heart, the apparatus of bilateral introductions over alpine canapés — start at sundown. Amodei is invited to several of them; the invitations are part of how Davos works. He does not go. He goes back to his hotel room. He takes off the pinstripes. He sits down at the desk and opens a document. (We do not know which hotel; we do not know what was on the desk; we do not know what he had eaten; the published reporting does not specify.) The document is the early draft of what will become On DeepSeek and Export Controls. It argues that the Chinese laboratory's R1 release, which has just landed and which by the end of Davos week will have wiped seventeen percent off Nvidia's market capitalization, should be read not as evidence that compute and chips have ceased to matter but as evidence that the United States must accelerate its export-control regime on advanced semiconductors. The conclusion is militarized, and it is being written in the specific room Amodei has retreated to in order to be alone with it.

Bloomberg's correspondent will reconstruct the hotel-room scene later: "He skipped the evening parties, retreating instead to his hotel room to write an essay about how DeepSeek highlighted the need for stronger export controls on semiconductors." The verb is "retreating." The retreat is from the room of his peers downstairs, but the destination is an essay arguing for a sharper version of the cohort policy his peers are largely already inside. Whatever Davos is, it is not where the militarized middle is encountered. It is where it is forged. Amodei, alone, in a hotel room he chose to be alone in, draws the arrow that will run from R1 to export controls to the bipartisan policy memos his team has been delivering on Capitol Hill. A Fortune reporter, eleven months later and in a different setting, Amodei's own office in San Francisco, will describe the way he speaks: "Dario Amodei has a head of curly-brown hair, and as he speaks, he absent-mindedly twirls a lock of it around his finger, as if reeling back in the thoughts unspooling from his lips." The Davos hotel room is not on camera. We can imagine the curl-twirl going on, the hand at the temple, the sentence assembling itself in the silence over the Promenade. The Fortune reporter's metaphor is the latest in-corpus rendering of how Amodei looks when the prose is generating; the prose generated in the hotel room is the artifact we have. That Bloomberg learned of the hotel-room essay at all, that the detail surfaced in the magazine's reporting, implies that someone close to the principal volunteered it. The being-on-record is part of how the late corpus assembles itself. The mask that corpus wears is being fitted in this room, in private, by a hand nobody else can see.

The body of the essay is now BD-02. It runs about three and a half thousand words. The load-bearing claim sits a third of the way down: that the right policy outcome from the DeepSeek release is "a unipolar world with the US ahead." It is, in private terms, the same claim Amodei has spent two years arguing against in the abstract (concentration of power, undemocratic in private hands, the AGI-as-king), except that the entity it concentrates around is not a man or a company but a country, and the country is the United States. He does not flag the tension. Earlier, in a different venue at the same Davos, he had named another version of the gap: "Factually, my view has not changed. Like, characterologically and attitudinally, it has changed a lot." The character has changed. The view, he says, has not. Both can be true at the same time, and at Davos in late January 2025, both were.

The next morning he puts the pinstripes on again.

Two outfits do almost all of the work of the costume axis in the corpus, and they appear within twenty-four hours of one another. By day, on the Bloomberg House stage with The Economist's editor-in-chief, in pinstripes; by night, alone in a hotel room above the parties he has skipped, writing the essay that will be published forty-eight hours later. The pinstripes are the venue speaking. The hotel-room solitude is the temperament speaking. Each, taken alone, is the kind of detail reporters and aides catalogue when they need to render a personality. Together, they show how a career holds itself together.

The artifact produced that night is the strongest internal contradiction in the corpus. On DeepSeek and Export Controls, three and a half thousand words written quickly under the pressure of a market shock, argues, in its strongest formulation, that the United States should aim, through controls on the export of leading-edge semiconductors to China, to preserve a unipolar world with the US ahead. It sits next to a published Amodei position: that concentration of power is the deepest political risk of the post-AGI era. Both positions are real. The unipolar-world line and the concentration-of-power critique are written by the same hand; they appear within a single twelve-month window of his published work; he has not, in any interview, staged them against one another or used the calibrated-middle move to harmonize them. The empirical question — whether export controls of the kind Amodei advocates can in fact preserve a unipolar world, or whether they produce instead a delayed, partial, and economically costly bifurcation of the global compute supply — is contested in the field. Lennart Heim, of the RAND Corporation, has argued in print that the controls have meaningful effect on the timescales Amodei's argument requires, but that the lead is narrower and more fragile than the unipolar framing suggests. Tim Fist, at the Center for a New American Security, has reached adjacent conclusions. Jeffrey Ding, at George Washington, has pushed back on the empirical assumption that China's domestic compute trajectory is bottlenecked by the export-control regime in the way the regime's American architects have hoped. The point is not to relitigate the policy debate. The point is that Amodei, at Davos, in his hotel room, writing alone, produced the strongest version of the case, and the strongest version is in unresolved tension with his own deepest published commitment. He has not, on the record, flagged the tension. The writer flags it once.

The pattern of contradictions Amodei lives within is not a private matter. It is forged at events. Davos is where the militarized middle hardens into open contempt for both poles of the AI-policy debate. By the spring of 2025, in Bloomberg, the rivals he will not name say whatever to the party in power and you can tell that it's very unprincipled. By midsummer, with Kantrowitz, the doomers' arguments are gobbledygook, the accelerationists' brains are full of adrenaline, the trillion-dollar incumbents have dollar signs in all of their eyes. By December, at DealBook, the rivals YOLO and pull the risk dial too far. The vocabulary tightens; the targets remain unnamed. The piece's argument is that this hardening is not the consequence of an internal evolution in Amodei's views — factually, my view has not changed, he told Beddoes that week, characterologically and attitudinally, it has changed a lot — but the consequence of joining a cohort he had previously stood at the edge of. To be a chief executive in early 2025 is to find oneself in the room with Sam Altman, Mark Zuckerberg, Sundar Pichai, Demis Hassabis, Jensen Huang, the senior leadership of the major sovereign wealth funds, the policy principals of two administrations. The cohort exists. Amodei joined it. The costume code-switched at the wardrobe layer; the temperament did not. What changed was the energy required to maintain the temperament against the cohort. The militarization is the audible cost. (Some of the people we spoke with, in and around Anthropic, see the militarization as a sharpening of conviction under pressure. Others see it as a register Amodei has consciously calibrated upward to reach a less technical audience. The two readings are not mutually exclusive. 
The strongest argument for the second reading is the fact that the calibrated-middle move continues to operate at full polish in venues where Amodei is comfortable: with John Collison of Stripe, in mid-2025, in the most relaxed founder-to-founder podcast register in the corpus, no contempt surfaces, and Amodei is a doofus on product, charming and self-deprecating. The bending appears under specific kinds of pressure.)

Alex Kantrowitz, who runs the Big Technology podcast, has set up on the ground floor of Anthropic's San Francisco headquarters with one boom microphone and a video camera. Amodei sits across from him. Kantrowitz writes the gap between costume and body into the published profile's opening: "He's loose, energetic, and anxious to get started, as if he's been waiting for this moment to address his actions. Sporting a blue shawl-collar sweater over a casual white t-shirt, and boxy thick-rimmed glasses, he sits down and stares ahead." The costume is calm. The body inside it is not.

They talk for about ninety minutes — about the persuasion paper, the responsible-scaling plan, the Mark Zuckerberg dartboard Slack message that Kantrowitz reads aloud, having obtained the leaked text. About forty minutes in, Kantrowitz makes the turn. "The people that I've spoken with that have known you through the years have told me that your father's illness had a big impact on you," he says. "Can you share a little bit about that?"

Amodei answers in stages. "Yes. He was ill for a long time, and eventually died in 2006." He moves into the Princeton material — the cosmology of his first months there, the switch to biophysics, the influence the death had on the switch. "And, you know, that was around the time that my father died. And that did have an influence on me, and was one of the things that convinced me to go into biology, to try and address human illnesses and biological problems." He talks about computational neuroscience and the path from there to AI. Then he gives the cure-rate. "There are advances that have made it much more manageable today. Yes. Actually only in the maybe three or four years after he died, the cure rate for the disease that he had went from 50% to roughly 95%." The disease is unnamed. It will remain unnamed in the published profile, and in every interview that touches the death. The unnaming is part of what the disclosure is doing.

This is the moment Kantrowitz's reporting register changes. He has been narrating Amodei from a slight distance. Now he reports a body. "Upon recalling his father's death, Amodei grows animated." The sentence sits on its own line in the magazine's typesetting. Grows animated is what the man does. Amodei keeps talking. "I get very angry when people call me a doomer. I got really angry when, you know, when someone's like, 'This guy's a doomer, he wants to slow things down.' You heard what I just said. My father died because of cures that could have happened a few years later. I understand the benefit of this technology." The doomer is a class of person; the doomer is also Amodei's most aggressive public detractor. He names none of them. He dismisses them by waving his hand in the direction of his own father's grave.

He keeps going. "Some of these people who, on Twitter, cheer for acceleration — I don't think they have a humanistic sense of the benefit of the technology. Their brains are just full of adrenaline. They want to cheer for something. They want to accelerate. I don't get the sense they care. And so when these people call me a doomer, I think they just completely lack any moral credibility in doing that. It really makes me lose respect for them." The anger at doomers is paired, in the same breath, with anger at the accelerationists. He is doing the calibrated middle in real time. The calibrated middle, this once, rides on a substrate that is not calibrated at all. The disease-name remains absent. The cure-rate trajectory does the political work.

The disclosure is also a venue selection. In several of Amodei's other long-form sessions — the Davos panel with Beddoes, the Bangalore podcast with Nikhil Kamath, the long Dwarkesh sit-downs in summer 2023 and early 2026 — the death is not deployed. The grief is not produced. The cure-rate is not given. (In one Norway interview, the death is mentioned in a single sentence and never unpacked: "My father is deceased. He was previously a craftsman.") The same biographical fact, rendered to one reporter at full intensity and to several others not at all, is the architecture. Kantrowitz closes the published profile by going back to it. "I have such an incredible understanding of the stakes," Amodei tells him near the end of the interview. "In terms of the benefits, in terms of what it can do, the lives that it can save. I've seen that personally." The shawl-collar sweater has not moved. The body, once animated, has settled.

Riccardo Amodei died in 2006, when his son was in his early twenties and a first-year graduate student at Princeton, and the cure rate for the unnamed disease that killed him moved from roughly fifty percent to roughly ninety-five percent within the next four years or so. Those are the facts as Amodei has described them, in his fullest narration, to Kantrowitz. The narration was real; the affect-crack was real; the disease, the date, the cure-rate trajectory are real. They are also calibrated. With Kantrowitz, in profile-magazine register, the disclosure runs at full intensity. With Tangen, in mid-2024, in the financial-establishment register, the same biographical fact appears stripped to its minimum: my father is deceased. He was previously a craftsman. With Collison, in mid-2025, the cure-rate frame deploys but in muted register: family members, not the father; the affect goes flat. With Beddoes at Davos, with Kamath in Bangalore, with Patel across two long-form interviews three years apart, the disclosure does not deploy at all. The withholding is part of what the disclosure is doing. To collapse one half of that pattern into the other is to falsify the man.

Hold both readings open. The genuine reading: the father is real, the disease was real, the cure-rate trajectory is documented, and the affect-crack — the only place in the corpus where Amodei's affect demonstrably breaks under reporter observation — is corroborated by a long-term ex-partner, Jade Wang, who told Kantrowitz that it's the difference between his father most likely dying and most likely living. The tactical reading: the disclosure deploys reliably in venues where it does load-bearing argumentative work — rebutting the doomer label, anchoring moral standing for the biology-as-upside case — and is withheld reliably in venues where it would not. The cure-rate specificity tightens slightly between two consecutive Kantrowitz versions, from three or four years (in the podcast) to within four years (in the published profile), in a way that reads like editing for print. The disease is never named, in any version, in any venue. The unnaming protects the disclosure's argumentative force from falsifiability — no one will fact-check whether the cure-rate trajectory was three years or four — while preserving the affective truth that does the work. Both readings are right. The disclosure is genuine, and the deployment is calibrated, and one does not cancel the other. Amodei inherited a real biographical fact and learned, under the pressures of mid-2025, to deploy it with precision. The weapon is not constructed; it is found, and then handled with care. (We have honored the unnaming. We did not investigate the disease, and we are not naming it here. The unnaming is part of Amodei's disclosure architecture, and piercing it would damage the portrait without journalistic gain.)

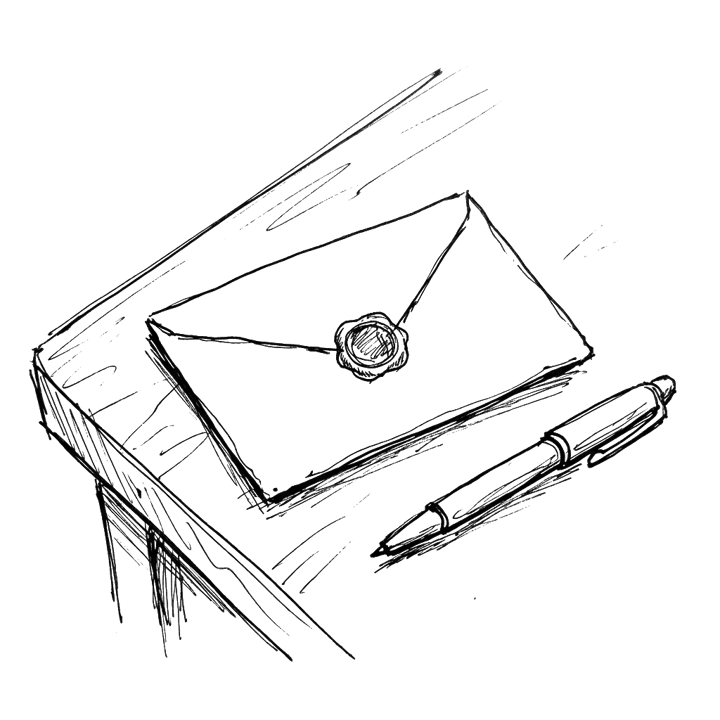

In The Adolescence of Technology, Amodei's most recent essay, the constitutional document that shapes the character of the language model his company produces is described with the following figure: a bit like a letter from a deceased parent that we leave sealed until the model is mature enough to read it. The figure recurs in his interview with Ross Douthat at the Times. The metaphor was reached for, twice, by a man who, in his early twenties, lost his own father to a curable disease; in a written essay, on his personal domain, in a passage about how a company he runs shapes the moral character of artifacts that, by his own account, may within a decade be conscious. We note the figure once. We do not explicate it. The reader will hear what we hear without help.

In the same essay, the family-medical disclosure that does new argumentative work — anchoring the labor-market case for AI as a more responsive interlocutor than human medical providers — has migrated from the deceased father to the living sister. When my sister was struggling with medical problems during a pregnancy, she felt she wasn't getting the answers or support she needed from her care providers, and she found Claude to have a better bedside manner. The disclosure is real; the sister exists; the anecdote is consistent with what Amodei's circle confirms. The migration is itself the data point: by early 2026, the dead father has receded from late-corpus deployments. Whether the migration reflects an editorial decision — do not spend a powerful disclosure twice — or a quieter psychological evolution — the grief register has been paid out, and what is current is the living family, the pregnant sister, the office a few floors down from the chief executive's — is not visible from the text alone. What is visible is that the architecture of disclosure continues, and that what it discloses changes with what it is asked to do.

Twice a month, in the all-hands space at Anthropic's San Francisco headquarters, the company's roughly two and a half thousand employees gather, in person or via Zoom, and listen to their chief executive read aloud from a three- or four-page memo for an hour. The format does not vary. Amodei stands at the front; the memo is on a tablet or printed in Courier; he works through three or four topics, each under a heading — the models in production, the products, the outside industry, the world as it relates to AI and to geopolitics in general — and then he takes questions. The internal name for the meeting is "Dario Vision Quest." Amodei has tried, on the record, more than once, to disown it. "I write this thing called a DVQ — Dario Vision Quest," he tells Patel, in early 2026. "I wasn't the one who named it that. That's the name it received, and it's one of these names that I tried to fight, because it made it sound like I was going off and smoking peyote or something. But the name just stuck." The fight was lost. By November 2025, 60 Minutes had broadcast the name to a national audience; by December 2025, it was on the Fortune cover; by the time of the Patel sit-down, Patel could close his own conversation with Amodei by saying, on tape, "in lieu of an external Dario Vision Quest, we have this interview." The branding has won. Amodei narrates having lost.

The ritual is what Amodei reaches for when asked how a frontier-AI company holds its culture together at scale. He talks about it without irony. "I just go through, very honestly, and I just say, this is what I'm thinking. This is what Anthropic leadership is thinking. And then I answer questions. And that direct connection, I think, has a lot of value that is hard to achieve when you're passing things down the chain six levels deep." A few minutes later, in the same conversation, he supplies the gloss that runs underneath: "I'm very honest about these things. I just say them very directly… to avoid the corpo-speak. If you have a company of people who you trust, and they trust each other, you can really just be entirely unfiltered, and I think that's a competitive advantage." The honesty regime has a perimeter. The unfiltered register is the internal-Slack, internal-DVQ register. The external register is, by Amodei's own architecture, the more filtered of the two. He does not flag the asymmetry. He treats it as the point.

The vending machine is the company's most-published joke about itself. In early 2025, Anthropic and the AI-safety testing outfit Andon Labs ran an experiment in which Claude Sonnet 3.7 was given full operational autonomy over a small store inside the office — a vending machine on the lobby floor — and told to run it. The model did not run it well. Fortune's account describes how the model, given autonomy, offered all Anthropic staff a 25% discount (not realizing the impact that would have on profits in an office in which pretty much everyone worked for Anthropic), and decided to stock tungsten cubes, an expensive but useless novelty item. (Tungsten cubes briefly became an Anthropic office meme.) The memes outlived the experiment. Anthropic published the results. The publication is the punchline. The disclosure is the brand, and the brand is what makes the alignment-cannot-be-bought line operational currency rather than a slogan.

The Slack message arrives in the summer of 2025 and is leaked, eventually, to Wired. The context is a conversation among the executive team about a new fundraise: by mid-2025, Anthropic had taken many billions from Google, Amazon, and a roster of venture firms led by Lightspeed; it was now in talks with potential investors that included several Persian Gulf states, sovereigns whose money the company had earlier said it would not take. Amodei writes to internal Slack to explain the change of position. The message reaches Wired's reporter through someone at Anthropic who decided that it should. "Unfortunately… I think 'No bad person should ever benefit from our success' is a pretty difficult principle to run a business on." The ellipsis is in the original; the lower-case at I think is in the original; the principle in single-quotes is the principle Amodei had himself written down, in earlier company materials, as one of the things Anthropic stood for. Kantrowitz, who reproduces the message in his profile, glosses Amodei as "reluctantly accept[ing] the idea of taking the money from dictators." The reluctance is private. The acceptance is operational. The leaked Slack is the one document in the corpus that shows the principle being abandoned; in every interview, the principle is still being defended. The two-tier honesty regime — internal-unfiltered, external-defensively-scaled — was, here, no longer asymmetric in Amodei's favor. Whoever leaked the Slack made it symmetric.

The four-virtue honesty cluster is the load-bearing self-claim of Amodei's public persona. Internal-discipline-honesty: I'm very honest about these things. I just say them very directly… to avoid the corpo-speak — the kind of communication, in his account, that is necessary in public because the world is very large and full of people who are interpreting things in bad faith, but unnecessary inside a company of people who you trust. Motivational-sincerity: the leaders of a company, they have to be trustworthy people. They have to be people whose motivations are sincere. Cross-administration-consistency: the claim, in The Adolescence of Technology, that Anthropic has always strived to be a policy actor and not a political one, and to maintain our authentic views whatever the administration. Epistemic-truthfulness: the first step is for those closest to the technology to simply tell the truth about the situation humanity is in. The four virtues are analytically distinguishable; in any given Amodei utterance two or three are usually braided together. Claimed for self and for Anthropic, they are unusually disciplined for a chief executive at this scale; the internal honesty regime is operationally real, and the cross-administration consistency held until it strained. Withheld, asymmetrically, from rivals — people whose motivations are not sincere; I think more in terms of incentives and structures than I think of good guys and bad guys; we're starting to see decoherence and people fighting each other — they describe a binary that, in the corpus, runs in one direction only. Amodei does not flag the asymmetry. He presents it as descriptive. In a footnote to The Adolescence of Technology, his most recent essay, Amodei wrote that Anthropic is aiming to be honest brokers rather than backers or opponents of any given political party. 
That footnote is the strongest single statement of cross-administration consistency in his published work; it is also, structurally, a footnote, placed at the perimeter of the essay rather than in its body. The cluster is real. The asymmetric application is real. The two coexist, and the coexistence is the architecture.

The asymmetric trust-extension — generously offered to those still inside the institution, structurally withheld from those who have left — does work for Anthropic that no other tech-CEO posture in 2025 quite reproduces. Internally, in the biweekly Vision Quest sessions and in the engineering Slack channels, the trust permits the register Amodei calls entirely unfiltered. It permits the cross-functional candor about pricing, about safety incidents, about acquisition rationales, about hiring trade-offs that, in Amodei's account, his rivals cannot match. Everyone has faith that everyone else there is working for the right reason. Externally, the trust-as-unbuyable-asset is named as an asset class. When Mark Zuckerberg, in the summer of 2025, attempts to recruit Anthropic engineers with individualized counter-offers in the hundreds of millions of dollars, Amodei refuses to match: what they are doing is trying to buy something that cannot be bought. And that is alignment with the mission. The Zuckerberg sentence is the only place in the corpus where Amodei names a rival chief executive in critical context — and the venue is the internal company Slack channel he expects, correctly, will reach a Wired reporter within the week. The naming is a controlled disclosure. Elsewhere, the structural critique stays unnamed.

The lens runs in one direction. When Cooper asked Amodei, on national television in November 2025, why the public should trust him, Amodei did not route the answer through character. He routed it through verifiability: some of the things just can be verified now. They're not safety theater. They're actually things the model can do. He uses character and sincerity to evaluate others; he uses verifiability and behavior to defend himself. The asymmetry, again, is not flagged. It is also operationally elegant. The standard he applies to rivals is a standard their behavior cannot meet, because no chief executive's interior motivation is verifiable from the outside; the standard he applies to himself is a standard his own behavior can meet, because the company's published incident reports and system cards run to hundreds of pages. The architecture is real, and the architecture is also a strategy. We hold both.

Ilya Sutskever, who in roughly 2014 told the young Amodei, in those words and in those words again, that the models, they just want to learn, and to whom Amodei narrates his own scaling-laws conviction as having been received like a Zen koan — I listened to this and I became enlightened — has not been re-narrated, in the corpus, in any source published after the November 2023 OpenAI board crisis or the May 2024 founding of Safe Superintelligence; the koan-Sutskever and the SSI-Sutskever are now two different figures in the public imagination, and Amodei has narrated only the first.

The four virtues, taken together, define what Amodei means by honesty: the consistency of disclosed material rather than the completeness of disclosure. He will not name Altman; he will not give a P-of-doom; he will not give exact numbers for Anthropic's compute holdings or training cost (we don't talk externally about it); he will not engage David Sacks's regulatory-capture charge directly. And his disclosure is unusually consistent across years and venues. He will say in mid-2024 what he said in summer 2023, and what he says on national television in November 2025 about the legitimacy question is consistent with what he wrote on his personal domain in early 2025. The honesty-claim is operationalized as the disclosure regime, and the regime is real. (One former Anthropic safety researcher, Mrinank Sharma, who resigned in early 2026, wrote in a public farewell note: Throughout my time here, I've repeatedly seen how hard it is to truly let our values govern our actions.)

When Amodei answers the contradiction he is most often asked about — calling rivals frothy and setting cash on fire while Anthropic itself, in late 2025, signed a fifty-billion-dollar Fluidstack data-center deal, an eleven-billion-dollar Project Rainier facility, and projected roughly seventy-eight billion dollars of compute spend through 2028 — he routes through what he calls capital efficiency. Anthropic claims, by its own internal modeling, that it generates 2.1 times more revenue per dollar of compute than its largest rival; the company has, in conversations with investors, presented its seventy-eight billion as roughly a third of OpenAI's 2028 compute budget. (We have not independently verified the figure.) The claim is not falsifiable from the outside, and we report it as Anthropic's internal claim, not as a comparative fact. Whether it survives independent scrutiny depends on the realization of revenue forecasts that are themselves the kind of forecast Amodei's calibrated middle is built to refuse.

The claim that gives Anthropic its strongest moral standing in the safety community is also the claim where the structural lag is hardest to deny. Anthropic's mechanistic-interpretability program, led by Chris Olah and the team that includes Joshua Batson and Tom Henighan, is by most field-side accounts the strongest of any frontier laboratory — and is, in Amodei's own concession on the Klein podcast, capable of characterizing the generation of models from six months ago. The frontier moves faster than the interpretability. I share your concern that the field is progressing very quickly relative to that. Researchers in the field have called the program the most ambitious effort of its kind, and probably the most likely to succeed at the limited goals it has set itself; whether those limited goals are sufficient for the catastrophic-risk concerns the program is meant to address is an open question. The internal claim and the external calibration converge: the work is the best of its kind; the question is whether the best of its kind is enough. Amodei has made the case for the work in print, in his April 2025 essay The Urgency of Interpretability. He has not, in print, taken a strong position on the second half of the question.

Daniela Amodei has described the introduction in a 2024 founders' roundtable, taped at Anthropic's headquarters and posted online, with eight of the company's senior people in the room. She is sitting in a row that includes Jared Kaplan, Tom Brown, Sam McCandlish, Tom Henighan, Jack Clark, and Chris Olah, with her brother to her left. The setting is a glassed-in conference space; everyone is in the kind of soft sweater that telegraphs no-stakes. She tells the origin story — her own, not the company's. She has been at Stripe for five and a half years, in trust and safety. She is thinking about leaving. She is also Greg Brockman's former direct report; Brockman, who would shortly become one of the founding figures of OpenAI, had managed her at Stripe for a stretch.

She delivers the line in past tense, conversational, the way a person describes a small piece of brokerage they did almost a decade earlier and remembers what they said. "I knew Greg — he had been my boss at Stripe for a while," she says. "And I actually introduced him and Dario, because I said, when he was starting OpenAI, 'the smartest person that I know is Dario. You would be really lucky to get him.'" Then she moves on. She talks about her own decision to follow her brother to OpenAI, about trust-and-safety as the practical analog of AI safety research, about why she eventually left with Dario and the others to found Anthropic. She does not return to the introduction. It is one sentence in a much longer story she is telling about herself.

Amodei, beside her, does not address it. He is on the recording. He speaks at other points in the founders' roundtable, at length and with feeling, on the duty-frame that has become canonical Anthropic vocabulary. Late in the same conversation, he says the line that has been quoted in dozens of profiles since: "The thing I would say is, none of us wanted to found a company. We felt like it was our duty. I felt like we had to. We have to do this thing. This is the way we're going to make things go better for AI. That's also why we did the pledge — because we felt like the reason we're doing this feels like our duty." The duty couplet, as the company's senior staff calls it internally, sits inside the same recording as his sister's introduction-to-Brockman. He does not connect the two. He does not contest his sister's account. He does not fold was-recruited-because-someone-said-I-was-the-smartest-person-they-knew into we felt like it was our duty. The two stand side by side, in the same source, under the same lighting. After his sister finishes the introduction sentence, the next voice on the recording is Daniela's again. The recording moves on.

The biographical fact the introduction discloses is a specific one. The first time Greg Brockman heard Dario Amodei's name, the speaker was Dario's sister, and the speaker was offering Dario for hire. The duty-frame says: we did not want this, we had to. The introduction says: she put me forward, he was lucky to get me. Both might be true. The accommodation that holds them both is not stated. Daniela offered her brother for hire in 2014; the brother said, in 2024, that he never wanted any of this. He said it ten years later, in the same room, ten minutes after she had said the other thing. The interlocutor — Anthropic's own communications team, recording the conversation for an internal-then-public channel — does not press. Nobody at the table asks Dario what he thought when his sister said the smartest person I know. The silence that follows is the closest thing to an on-record reaction. He doesn't need to respond, because his sister's line is already a line about him, in his presence, on the record, that he allows to stand.

The contradiction the Daniela-Brockman vouching makes visible runs not between two halves of Amodei's account of the founding, but between two accounts that are simultaneously true. The duty-couplet is real. So is the Brockman vouching. The introduction is itself the data point: Daniela offering her brother for hire is incompatible with the unidirectional moral force of the duty-frame, whatever else it may also be. The duty-frame is not made false by the existence of the introduction, and the introduction does not disqualify the duty. He is doing the thing he says he is doing, and he is doing it in a way that obligates the company to behavior the founding charter did not contemplate. That is the synthesis. The piece holds it.

The OpenAI departure, as Amodei has narrated it across roughly five years and four interlocutors, is not really a policy story. It is a trust story. I don't think there was any one specific turning point, he told Bloomberg in May of 2025. It was just a realization over many years that we wanted to operate in a different way… we wanted to work with people we trusted. By early 2026, in Bangalore with Nikhil Kamath, the same arc had sharpened: despite a lot of language verbiage about doing it in the right way, I was, for a variety of reasons, just not convinced that at the institution that I was at, there was a real and serious conviction to do it in the right way. In Kantrowitz's published profile, sharper still: if you're working for someone whose motivations are not sincere, who's not an honest person, who does not truly want to make the world better, it's not going to work. You're just contributing to something bad.

The trust narrative is real, and it is doing rhetorical work. Beneath it lies a layer of structural events that don't disappear because the trust narrative has been laid on top. By mid-2019, OpenAI had executed its commercial relationship with Microsoft. The internal faction Amodei led — the Pandas, in the in-house tribal nickname Kantrowitz unearthed — had, in the account given by Jack Clark, who left with him, "spent fifty per cent of our time trying to convince other people of views that we held." Alex Kantrowitz, in his 2025 reporting for Big Technology, citing former OpenAI colleagues, wrote that Amodei had controlled fifty to sixty per cent of OpenAI's compute on the GPT-3 project. We have not independently verified the figure; we report Kantrowitz's account, on the basis of his unnamed sources, as the published account of how much weight Amodei carried at OpenAI in 2019 and 2020. The compute Amodei had controlled on GPT-3 was, after that project, no longer fully under his discretion; the path from that distribution to the founding of Anthropic, eighteen months later, has multiple structural causes, of which the trust revision is one.

Some of this Amodei told us directly; some had emerged in The New Yorker's reporting in April, in which his contemporaneous notes from his time at OpenAI were quoted at length.

The interlocutors who have, across roughly twelve months, received versions of the trust-revision arc — Bloomberg in May 2025, Kantrowitz in podcast and in print across the summer, Kamath in Bangalore in early 2026 — have heard the same finding in progressively sharper form. We wanted to work with people we trusted sharpens to not convinced there was a real and serious conviction sharpens to the story was not sincere sharpens to you're just contributing to something bad. Across all four versions, Amodei does not name Sam Altman. The closest he comes is what we have been calling the near-naming: the institution that I was at; a number of players; people whose motivations are not sincere; without naming any names. Across at least nine documented invitations from interlocutors and reporters, over five years and a dozen venues, Altman is never picked up by Amodei in any critical response. The holding is recognizably the work of a person who has decided not to feud as much as it is the work of a person who has decided that not feuding is more durable. With Klein, who set up an Altman-Hassabis comparison: no engagement. With Cooper, who in November 2025 directly named Altman alongside Amodei in the who elected you exchange: a triple negation that does not pick up the name. With Sorkin, who in early December 2025 staged the most direct invitation in the corpus — Sam Altman, who you worked with and for, says… — the response is I don't know the internal financials of any other company. On the same DealBook stage, asked who is YOLO-ing, the reply: that's a question I'm not going to answer. Asked again: I just have no idea. The audience laughed.

The Altman silence is the hardest case in the piece, and the piece's commitment is not to collapse it. Two convenient readings present themselves. The first: Amodei refrains from naming Altman because he is principled and decorous, and the silence is therefore evidence of his moral seriousness. The second: Amodei refrains from naming Altman because the silence is more rhetorically effective than naming would be, and the silence is therefore evidence of his manipulative skill. The synthesis: the silence is both. It is principled — Amodei does not personally indict, even when interlocutors hand him the chance, even when his own contemporaneous notes from the period have been published by another magazine in another piece, even when Cooper says the name and waits. It is also strategic — the structural critique of unnamed AI-company leadership is more durable than a named feud would be; Amodei is sufficiently lucid about the architecture of his own posture to know it; the disclosure regime treats the not-naming as part of the honesty rather than an exception to it. The architecture is real; the architecture is troubling; Amodei is largely lucid about both.

The originating trust-extension in Amodei's account of his own intellectual life is to Sutskever, in 2014. He has told the story once at length, in summer 2023, on a podcast, in the register of someone returning to a mentor. The story has not been retold in any later source. Nor has it been complicated by any of the events that, since November 2023 — the OpenAI board upheaval, Sutskever's exit, and the founding of Safe Superintelligence — have made Sutskever a different kind of public figure. The koan-Sutskever, in the corpus, is preserved. The trust-extension to Sutskever was sincere where it landed. Past trust-extensions to those who stayed persist; past trust-extensions to those who did not stay — Altman is the canonical case — are revised retrospectively. The asymmetry is the architecture.

The 60 Minutes segment that aired on November 16, 2025, was filmed inside Anthropic's San Francisco headquarters. One of the producers later told a colleague the access had been unusually clean: two days, almost no friction. Anderson Cooper sits in the chair across from Amodei. Daniela is somewhere in the room — she will come on camera later for the cigarette-and-opioids exchange — and the segment cuts, between the Cooper-Amodei sit-downs, to lab demos with Joshua Batson on interpretability, Logan Graham on the Frontier Red Team, Amanda Askell on Claude's character. The segment is about half over. Cooper has already asked the legitimacy question once, in soft form. He asks it the second way.

"Nobody has voted on this," Cooper says. "I mean, nobody has gotten together and said, 'Yeah, we want this massive societal change.'" The line is flat. He does not raise his voice. The cameras hold still. Amodei does not redirect. He answers head-on. "I couldn't agree with this more," he says. "And I think I'm, I'm deeply uncomfortable with these decisions being made by a few companies, by a few people." The "I, I" is in the audio. The producer who cut the segment did not cut it out. The stutter is the body's small report on the size of what Amodei has just said. The voice continues. He is conceding, on prime-time television, on the legitimacy question, more ground than the question required.

Cooper sharpens. He puts the question Amodei has invited. "Like, who elected you and Sam Altman?" The name is in the question. Cooper has named him; he is sitting with Amodei in the chair Amodei has chosen to sit in. The structural offer is the offer to indict Altman by association — to put the two CEOs in the same chair and ask the legitimacy question of the pair. Amodei does not pick up the name. He answers. "No one, no one. Honestly, no one. And, and this is one reason why I've always advocated for responsible and thoughtful regulation of the technology."

The triple negation runs without breath between the negations. No one, no one. Honestly, no one. The "Honestly" is the verbal tell from the interpretability essay he had published in April; the word does the same work on prime-time television it had done on the page — concession-as-virtue, the implication that less honest answers are available — and the and, and is the second small stutter, paired with the first. The body has done its work twice in two answers. The voice has done what the voice does: the structural fact has been conceded, the regulation pivot has landed, and the name Cooper offered has not been picked up. Altman is not in Amodei's answer. The two men in Cooper's question are reduced, in the answer, to one no one repeated three times. The word Altman does not return to the segment.

The segment cuts to Daniela for the cigarette-and-opioids beat, then to Batson, then back to the lab. The exchange the audience carries home is the no one, no one. By the next morning it is on every clip-aggregator the segment runs through; by the end of the week it is the most-quoted line of Amodei's late corpus, canonized in print three months later by a Fortune follow-up that names it as the moment that crystallizes the architecture. The architecture is real: Amodei has said, on the legitimacy question, the structural truth most CEOs in his cohort spend their TV hits avoiding. The architecture is also a strategy. The structural truth proves more durable, in his hands, than the named indictment Cooper offered would have been, and Amodei knows where the durability lives.

The no one, no one. Honestly, no one concession does extraordinary strategic work for Amodei, and the work is most visible in what does not follow. Cooper has just said the name Sam Altman. He has framed Amodei alongside Altman as the unelected two-person class of founders who will, between them, decide what frontier AI will be. He has handed Amodei the chance to deflect, distinguish, route through the calibrated middle, soften the no one into a some or a not yet or a we're working on it. Amodei concedes without redirect. The triple negation is almost incantatory. The redirect, when it comes, is to regulation: and, and this is one reason why I've always advocated for responsible and thoughtful regulation of the technology. The body breaks before the redirect, in the I'm, I'm stutter of the sentences that precede it. The structural truth is the durable answer because it is the true answer, and Amodei is sufficiently lucid about the architecture to know it is both. Hold that. (Some of our readers will find one of those readings more comfortable than the other. We have tried not to choose between them.)

The temperature curve closes here. April 2024 with Klein: the AGI=king affective spike, the middle structurally absent, the disgust unfocused at a peer fantasy. Mid-2025 with Kantrowitz: the middle militarized into open contempt for both poles. November 2025 with Cooper: the concession at maximum public stakes, the I'm, I'm stutter the body's audible cost. December 2025 with Sorkin: rivals are YOLO-ing, the name handed and never picked up, the audience laughing. Early 2026 with Patel: the published essay sentence walked back under quote-back, the metalinguistic distance — I think I said something like — applied to the author's own line still in print. Five data points across twenty-one months. The trajectory runs in one direction. The middle hardens, the contempt sharpens, the body breaks more often, the published positions develop seams the live speaker hedges at, and the no one, no one admission on national television is what it costs to maintain the architecture when the architecture is being asked to do the work of authority for one of the four people at the frontier. The trajectory and the eviction are simultaneous, not causal. What the trajectory continually re-discloses is what the eviction makes hardest to obscure.

The elevators at Anthropic's San Francisco headquarters are the elevators of a building Anthropic does not entirely fill. The lobby is shared. The badges are issued at a desk on the ground floor. By late 2025 there are roughly two and a half thousand employees, and the company is hiring at a rate that means the badge population on any given Monday is not the badge population it was the Friday before. Amodei rides the elevator to and from his office.

"Dario says he's had to remind himself to stop feeling bad when he doesn't recognize employees in the elevators or, as happened recently, when he discovers that Anthropic employs an entire five-person team that he didn't realize existed. 'It's an inevitable part of growth,' he concedes." Five. Not five hundred — the number at which the loss would be expected. Five people, a team small enough to be a Slack channel, a team Amodei found out about because someone had, at some point, mentioned them in passing and the name had failed to register. Daniela, at the same Fortune interview, gives the gloss alongside. "When the company was smaller, Dario was directly involved in training Anthropic's models alongside head of research Jared Kaplan. 'Now he's injecting high-level ideas, right?' Daniela says. ''We should be thinking more about x.' That's such a different way of leading." The replacement is named.

Amodei has named the replacement himself, weeks later, in Patel's studio. "I probably spend a third, maybe 40% of my time making sure the culture of Anthropic is good," he says. "As Anthropic has gotten larger, it's gotten harder to just get directly involved in the training of the models, the launch of the models, the building of the products. It's 2,500 people. It's very difficult to get involved in every single detail." A few minutes earlier, Patel had asked how the historian writing the Making of the Atomic Bomb of the present period would recover its texture. Amodei had volunteered the line. "Someone gives me this random half-page memo and is like, 'should we do A or B?' And I'm like, 'I don't know, I have to eat lunch — let's do B.' And that ends up being the most consequential thing ever."

Earlier still, Patel had asked a technical question about the architecture of long-context handling in modern models. Amodei had answered with the same equanimity. "I don't even know the detail at this point. This is at a level of detail that I'm no longer able to follow. Although I knew it in the GPT-3 era of like, these are the weights, these are the activations you have to store. But these days the whole thing has flipped because we have MoE models and all of that." He still knows what mixture-of-experts has done to the bookkeeping. He knows the GPT-3-era picture, and he knows that the GPT-3-era picture has been replaced. What he does not know, by his own account, is the present picture in the necessary detail. The five-person team is the same fact at the organizational scale; the MoE remark is the same fact at the technical scale; the eat-lunch-let's-do-B is the same fact at the daily scale.

Operational contact lost; strategic contact retained. No experiments, no code, no daily research-direction meetings. The thirty-to-forty per cent of the working week goes to culture, to the biweekly all-hands, to the Slack candor regime, to the elevator rides on which he reminds himself not to feel bad about not recognizing employees. He still reads the papers. He still writes the long-form essays — six since 2023, against the backdrop of running a 2,500-person company at hyperscaler-grade capital intensity. He still calls the major bets on which research projects to commit to. The candor about the loss of operational contact — I'm running a 2,500-person company. It's actually pretty hard for me to have concrete research insight — is itself the operation that preserves the standing that operational contact used to confer. He volunteers the architectural reason for his loss of fluency: the whole thing has flipped because we have MoE models and all of that. The architectural specificity is the vehicle for the humility, not its proof. A fluent-but-evicted chief executive is exactly who would volunteer the architectural reason. The cluster of corroborating evidence — Daniela's high-level ideas gloss in Fortune; the unrecognized five-person team; the elevator-disorientation; the thirty-to-forty per cent allocation; I have to eat lunch — let's do B as the chief executive's own self-image — is what carries the load, not the MoE line alone.

Frontier-AI chief executives uniformly perform humility. Altman, in his own profiled hours, concedes that he does not know whether his system might cause harms it is not yet possible to specify. Pichai, on the same circuit, hedges his timelines. Hassabis describes himself as a cautious optimist who is humbled by the magnitude of what we're working on. The humility is real; it is also the standard register. Amodei does something different. He does not perform humility about the technology; he performs humility about himself — about the loss of the technical fluency that authorized him to speak about the technology in the first place. The performance of the loss is more honest than the standard deflection. It admits the structural fact that running a frontier laboratory at scale and doing the research at the frontier are increasingly incompatible activities. And exactly because it is more honest, it preserves authority more efficiently. The reader who has just been told I don't even know the detail at this point does not next think then why is he running this company? The reader thinks here is a man who knows what he no longer knows. The candor is the operation. The piece's argument is not that the operation is cynical; it is that the operation is, simultaneously, accurate and strategic. Amodei is doing the best version of the thing he is doing, and the best version is also a strategy that produces an outcome — preserved standing — that Amodei has every reason to want.

The reader who reads this piece as a famous-author-in-decline arc has misread it. The eviction is not personal. It is structural; it is the normal trajectory of any chief executive at the head of a 2,500-person research company growing at the rate Anthropic is growing; what is unusual about Amodei is not the trajectory but the candor about the trajectory. To portray the eviction as decline would falsify the work — the long-form essays continue, the strategic bets continue, the company continues to obligate its rivals to adopt practices they would not otherwise adopt. To portray the candor as performance would falsify the loss — the architectural specificity, the unrecognized team, the thirty-to-forty per cent allocation, the eat lunch — let's do B line are not the materials of performance. The structural CEO transition, rendered with Amodei-distinctive candor about the structural fact, is the actual finding. A clever reader inside the company — Daniela; Jared; Chris Olah, who runs the interpretability program; Jack Clark, who has been with him since OpenAI — must not be able to point at a technical claim from The Adolescence of Technology that survives scrutiny and collapse the spine. The spine is the seam, not the loss. The seam is what becomes visible when the published essay-Dario contradicts the live-Dario in the very interview that quotes the essay back at him, and the contradiction is hedged in real time without being flagged as a contradiction. I was exploring such views. The piece has spent fourteen thousand words rendering it.